The Lumper Problem: How AI Is Quietly Narrowing Human Thought

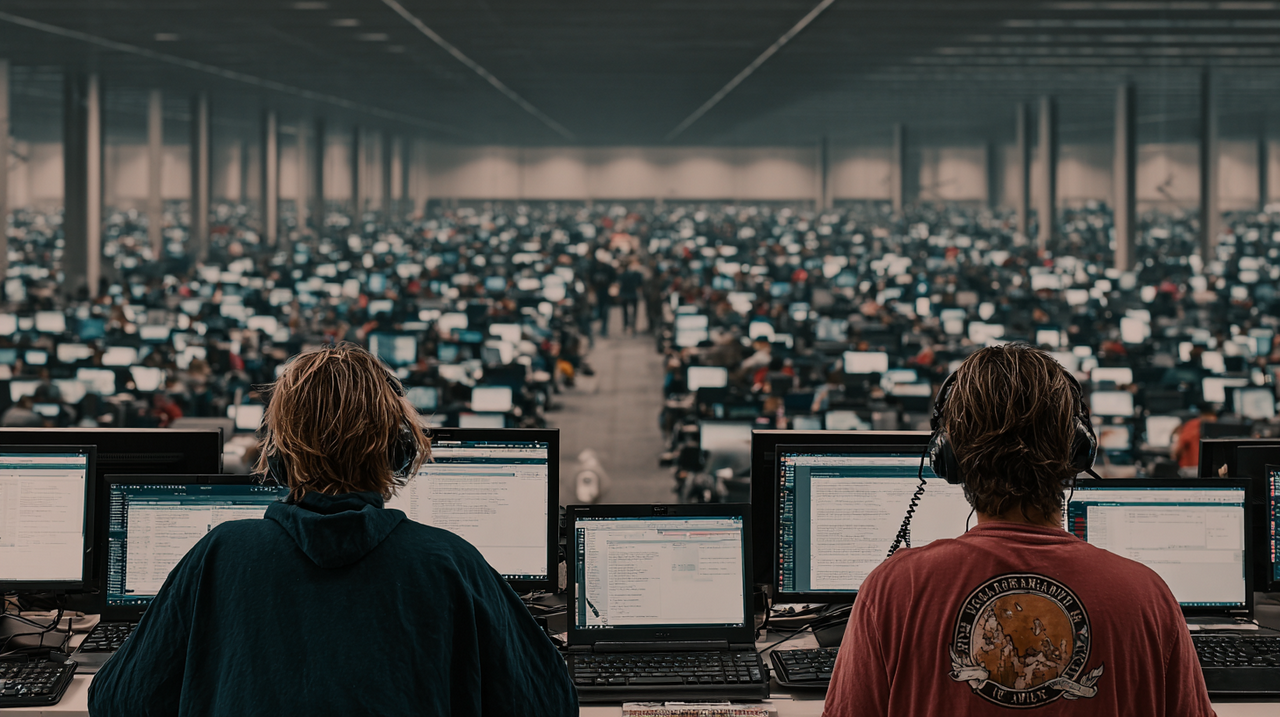

On the morning of 9 April 2026, a small miracle of coordination is unfolding in the cognitive infrastructure of the planet.

A graduate student in Hyderabad is asking Claude how to tighten the argument in a paper on monetary policy. A copywriter in São Paulo is feeding ChatGPT the bullet points for a pitch deck. A civil servant in Warsaw is asking Gemini to draft a consultation response on housing density. A novelist in Lagos wants to know whether her second chapter drags. A thirteen-year-old in suburban Ohio is asking an assistant, any assistant, whether she should reply to a text from the boy she likes.

None of them know each other. None of them are writing about the same thing.

And yet the sentences they are about to produce will share more DNA than any comparable population of human sentences has shared since the King James Bible standardised written English in 1611. The cadences will be familiar. The rhetorical scaffolding will be familiar. Tactful three-point framing, tentative fourth consideration, breezy affirming close. Certain adjectives will recur at a frequency no unassisted population of writers has ever produced. And certain ideas, once prominent, will be faintly audible or missing entirely, as if someone had quietly removed a frequency from the signal.

A paper circulating on arXiv in early 2026 calls this, with characteristic academic understatement, “algorithmic monoculture.”

The term is not new. Jon Kleinberg and Manish Raghavan introduced it in the Proceedings of the National Academy of Sciences in 2021, back when it still functioned mostly as a warning about hiring software and credit-scoring systems. The newer work expands the frame. It argues that the rise of large language models, trained on overlapping corpora, fine-tuned using near-identical methods, and optimised against a suspiciously similar set of human preferences, has produced something the world has not previously had to reckon with: a planetary-scale cognitive layer that is simultaneously almost invisible to individual users and profoundly consequential, at the population level, to the diversity of human thought.

The individual-level invisibility is the interesting part.

Walk up to any one of those users and ask them whether the AI is helping. They will say yes. The assistant is responsive. The writing is better than what they would have produced alone. The code compiles. The email hits the right tone. The student understands monetary policy now in a way she did not understand it at breakfast. Each interaction is, in isolation, a small gift.

And it is precisely because the interactions are small, isolated gifts that the aggregate effect is so hard to see. There is no aggrieved party. There is no victim. There is only the slow, statistical narrowing of the range of things that get written, thought, proposed, rejected, tried, and considered.

The monoculture does not feel like a monoculture from inside it. It feels like being helped.

The Paper That Said the Quiet Part

The arXiv paper, and the broader cluster of early-2026 work around it, does something previous contributions in the literature mostly refused to do. It tries to estimate the thing that is being lost.

The headline result is simple. When a representative multilingual sample of fifteen thousand human respondents from five countries is asked to produce preference rankings across a standard battery of open-ended questions, and the same battery is put to twenty-one leading language models, the models collectively occupy a region of preference space that covers roughly forty-one per cent of the range humans span.

The other fifty-nine per cent is not underrepresented. It is absent.

That finding is in line with a string of earlier results that, taken together, amount to something closer to a verdict. A paper in the Cell Press journal Trends in Cognitive Sciences, first circulated as an arXiv preprint in 2025, found that co-writing with any mainstream LLM, regardless of which company trained it, produced sentences whose stylistic variance collapsed towards a common centre within a handful of exchanges. A large-scale analysis of fourteen million PubMed abstracts by researchers at Tübingen, first published in 2024 and updated in 2025, documented a sudden surge after November 2022 in the frequency of a small, stable set of “LLM preferred” words: delve, intricate, showcasing, pivotal, underscore, meticulous. In some sub-corpora, more than thirty per cent of biomedical abstracts now carry the linguistic fingerprint of having passed through a chatbot.

A separate working paper measured writing convergence in research papers before and after ChatGPT's release. Early adopters, male researchers, non-native English speakers, and junior scholars moved their prose fastest and furthest towards the model mean.

The people who most needed the help were the ones whose voices changed the most.

Something similar is happening in creative domains, although the evidence is messier. The Association for Computing Machinery's 2024 conference on Creativity and Cognition published a paper whose findings most researchers in the area now treat as foundational: ask humans to generate divergent-thinking responses to open prompts, and you see the expected long-tail distribution of weird, bad, brilliant, and unclassifiable answers. Ask an LLM the same, and you get a narrower, tighter, more plausibly-competent set of responses.

On average, the LLM does well. At the population level, it produces far less variety than a comparable population of humans.

The authors used the phrase “homogenising effect on creative ideation” and meant it literally. Other groups have pushed back, arguing that the picture is more complicated and that sampling choices matter. The disagreement is real. The overall direction of drift is not really in dispute any more.

How the Narrowing Happens

To understand why the drift is happening, it helps to dispense with two stories.

The first is that the models have a secret aesthetic they are imposing on us. They do not. The Midjourney look and the ChatGPTese voice are not creative preferences in any meaningful sense. They are artefacts of the training and tuning pipeline.

The second is that the problem is a handful of frontier labs colluding to produce bland output. They are not colluding. They are doing the same thing independently because the gradients of the problem push everyone towards the same hill.

The first gradient is the training data. A language model is, in the end, a statistical compression of a corpus. If you scrape Common Crawl, Wikipedia, the major English-language book collections, StackExchange, Reddit, GitHub, and a handful of licensed newspaper archives, you will end up with a corpus that overlaps by perhaps seventy or eighty per cent with anyone else's scrape of the same substrate. There are differences around the edges, a bit more Chinese here, a bit more code there, a different cut-off date, but the overall shape is remarkably stable across labs. Dolma, The Pile, RedPajama, C4, FineWeb: each is an attempt to produce a general-purpose training corpus and each contains a broadly similar cross-section of publicly available human text.

Models trained on such substrates are already close to each other before any tuning happens. They have been fed from the same trough.

The second gradient is reinforcement learning from human feedback. This is the technique that turned eerily capable text continuation engines into the compliant, helpful assistants that five hundred million people now use daily. The idea is simple. Present humans with pairs of model outputs, ask which is better, train a reward model on those preferences, then use the reward model to fine-tune the base model. The result is a system shaped, gradient step by gradient step, to produce answers humans in the labelling pool tend to approve of.
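The reward-model step described above can be sketched in a few lines. This is a minimal illustration that assumes a Bradley-Terry preference model, a common choice for pairwise comparisons but not necessarily what any particular lab uses. The three responses and the vote counts are hypothetical, and a real reward model is a neural network scoring arbitrary text rather than a table with one number per response.

```python
import math

def fit_reward_model(pairs, n_items, lr=0.5, epochs=500):
    """Fit one scalar reward per response from (winner, loser) index pairs.

    Bradley-Terry assumption: P(winner beats loser) = sigmoid(r[winner] - r[loser]).
    Training is plain gradient ascent on the log-likelihood of the labels.
    """
    r = [0.0] * n_items
    for _ in range(epochs):
        grad = [0.0] * n_items
        for winner, loser in pairs:
            p_win = 1.0 / (1.0 + math.exp(-(r[winner] - r[loser])))
            grad[winner] += 1.0 - p_win   # push the preferred response up
            grad[loser] -= 1.0 - p_win    # push the rejected response down
        for i in range(n_items):
            r[i] += lr * grad[i] / len(pairs)
    return r

# Hypothetical labels. Response 0 is structured and hedged, 1 is terse,
# 2 is idiosyncratic. Each tuple is (preferred, rejected).
pairs = (
    [(0, 1)] * 7 + [(1, 0)] * 3
    + [(0, 2)] * 9 + [(2, 0)] * 1
    + [(1, 2)] * 6 + [(2, 1)] * 4
)
rewards = fit_reward_model(pairs, n_items=3)
print([round(x, 2) for x in rewards])
```

Even though the idiosyncratic response wins some comparisons, the fitted rewards rank it last, and it is the ranking, not the dissenting votes, that the fine-tuning stage then optimises against.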

The problem is that humans in the labelling pool, particularly professional labellers working through the contract platforms the frontier labs use, develop remarkably consistent tastes. They prefer answers that are structured, polite, hedged, and comprehensive, written in a register most people would recognise as American corporate email. They dislike answers that are rude, uncertain, fragmentary, idiosyncratic, or strange.

None of this is their fault. It is a predictable consequence of asking a few thousand people to impose ratings on millions of responses. You get the average of their tastes. Not the span.

The third gradient is optimisation itself. Reinforcement learning, by its nature, pushes policies towards the highest-scoring actions available. Apply it to language generation and the model concentrates its probability mass on outputs that reliably score well. Researchers call this “mode collapse,” a phrase borrowed from the generative adversarial network literature, and the phenomenon has been documented so many times in RLHF pipelines that it is now regarded as a standard side effect. A 2024 ICLR study measured the effect and found that post-RLHF models exhibited “significantly reduced output diversity compared to SFT across a variety of measures,” with the authors explicitly framing this as a tradeoff between generalisation quality and the breadth of the response distribution.

In plain English: the models get better at the average task and worse at producing a range of answers to any one task. They converge on the plausible-sounding centre.
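A toy calculation makes the concentration visible. The sketch below assumes the textbook KL-regularised objective, maximise expected reward minus beta times the KL divergence from the base model, whose optimum has a closed form: the base distribution reweighted by exp(reward / beta). The four candidate answers, their rewards, and the beta values are illustrative assumptions, not measurements from any real system.

```python
import math

def entropy(p):
    """Shannon entropy in nats; higher means a broader spread of answers."""
    return -sum(x * math.log(x) for x in p if x > 0)

def tilt(base, rewards, beta):
    """Closed-form maximiser of E[reward] - beta * KL(policy || base)."""
    weights = [b * math.exp(r / beta) for b, r in zip(base, rewards)]
    z = sum(weights)
    return [w / z for w in weights]

# Four hypothetical answers to one prompt, equally likely under the base model.
base = [0.25, 0.25, 0.25, 0.25]
rewards = [1.0, 0.8, 0.3, 0.0]

entropies = []
for beta in (10.0, 1.0, 0.1):  # smaller beta = harder optimisation pressure
    policy = tilt(base, rewards, beta)
    entropies.append(entropy(policy))
    print(beta, [round(p, 3) for p in policy], round(entropies[-1], 3))
```

As beta shrinks, nearly all of the probability mass moves onto the single highest-reward answer and the entropy falls, which is the narrowing the paragraph above describes.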

The fourth gradient is feedback from deployment. Once a model is serving production traffic, the telemetry from its users shapes the next round of training. Responses users rate up are preferred. Responses users regenerate or abandon are suppressed. And the users, naturally, have been trained on earlier outputs of the same models.

They prefer things that look like what they have come to expect. Within a few cycles, the distribution of acceptable responses narrows further, and the aesthetic the model produces becomes the aesthetic its users demand, which becomes the aesthetic the model produces.

The loop closes.

This is the mechanism by which “the ChatGPT look” became a recognisable category in 2023, stabilised through 2024, and was operating as a near-parody of itself by late 2025. It is a statistical attractor in the feedback graph.
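The attractor can be illustrated with a deliberately crude model. The sketch assumes that in each deployment cycle users approve of a style in proportion to how familiar it already is (the hypothetical `familiarity_bias` exponent), and that the next model reproduces styles in proportion to current share times approval. Real feedback pipelines are far messier; the point is only the direction of travel.

```python
import math

def entropy(p):
    """Shannon entropy in nats of a distribution over styles."""
    return -sum(x * math.log(x) for x in p if x > 0)

def feedback_cycle(shares, familiarity_bias=0.5):
    """One train-deploy-retrain loop.

    Assumed approval for a style is share ** familiarity_bias, so the
    retrained share is proportional to share ** (1 + familiarity_bias).
    """
    weights = [s ** (1.0 + familiarity_bias) for s in shares]
    z = sum(weights)
    return [w / z for w in weights]

# Five hypothetical writing styles with mildly unequal starting shares.
styles = [0.30, 0.25, 0.20, 0.15, 0.10]
history = [entropy(styles)]
for _ in range(6):
    styles = feedback_cycle(styles)
    history.append(entropy(styles))

print([round(s, 3) for s in styles])
print([round(h, 3) for h in history])
```

A modest initial lead compounds: after six cycles the leading style holds most of the distribution, and the entropy drops at every step without any single cycle looking dramatic.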

The Ghost in the Text

If you want to see the monoculture in the wild, you do not have to look very hard.

The Tübingen paper on PubMed abstracts is the most quantitatively damning evidence, and the excess-vocabulary methodology used there has since been applied to other corpora with consistent results. News writing, marketing copy, policy consultations, customer support macros, cover letters, LinkedIn posts. Every corpus where people write under time pressure shows the same tell-tale vocabulary surge. A 2025 study testing English news articles for lexical homogenisation found some metrics moving and others holding steady, a useful corrective against overclaiming. But nobody is now arguing that writing on the open web looks the same in 2026 as it did in 2021.
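The core of an excess-vocabulary check is simple enough to sketch. The version below is a simplification and an assumption rather than the paper's exact procedure: it uses the pre-period frequency of a word directly as the baseline, where a careful analysis would project a counterfactual trend from earlier years. The toy abstracts and the helper names `doc_frequency` and `excess_usage` are hypothetical.

```python
import re

def doc_frequency(abstracts, word):
    """Share of abstracts that contain the word at least once."""
    hits = sum(
        1 for text in abstracts
        if word in re.findall(r"[a-z]+", text.lower())
    )
    return hits / len(abstracts)

def excess_usage(before, after, word):
    """Return (gap, ratio) of the word's frequency after versus before."""
    q = doc_frequency(before, word)   # baseline frequency
    p = doc_frequency(after, word)    # observed frequency
    ratio = p / q if q > 0 else float("inf")
    return p - q, ratio

# Toy corpora standing in for abstracts before and after late 2022.
before = [
    "We study protein folding in yeast.",
    "We delve into registry data.",
    "Results show a modest effect.",
    "A randomised trial of statins.",
]
after = [
    "We delve into pivotal signalling pathways.",
    "This study will delve deeper into outcomes.",
    "We delve into intricate mechanisms.",
    "A randomised trial of statins.",
]
gap, ratio = excess_usage(before, after, "delve")
print(gap, ratio)
```

On real corpora the interesting words are the ones whose ratio jumps while their subject matter has not changed, which is what separates a stylistic fingerprint from a genuine shift in research topics.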

The visual domain is noisier, partly because the models change faster and partly because creative industries have aggressively developed counter-aesthetics. The “Midjourney look,” a recognisable stew of moody lighting, glassy skin, hyper-saturated background bokeh, and compositions that feel vaguely cinematic without belonging to any specific film, became so pervasive in 2023 and 2024 that stock photography buyers began filtering it out as a separate category. Professional illustrators and art directors responded by prompting against it, fine-tuning custom models, and, in some cases, branding human-made work as “not AI” the way food manufacturers brand their products “not GMO.”

The counter-movement has produced some of the more interesting visual culture of the last two years. It exists in reaction to a monoculture it did not create.

In software, the convergence is more measurable. The major coding assistants, GitHub Copilot, Cursor, Anthropic's Claude Code, Google's Gemini Code Assist, now write or materially influence something on the order of forty per cent of the code committed to open-source repositories, and a higher share of new code inside large enterprises. They do this against a training substrate that is itself overwhelmingly composed of previously-written open-source code. The result is a global convergence on a narrow set of idioms: particular naming conventions, particular error-handling patterns, particular library choices.

Experienced engineers report the strange sensation of reading a new codebase and recognising the model's fingerprint before they can identify the author's.

Hiring is perhaps the clearest case of Kleinberg and Raghavan's original concern becoming literal. By the time a candidate's CV reaches a human reviewer at a Fortune 500 firm in 2026, it has typically passed through multiple LLM-based screening layers. The screening models are fine-tuned on labelled examples of “good” and “bad” candidates, and the labels come from a small number of vendors whose training sets overlap heavily. A paper on arXiv in early 2026 on strategic hiring under algorithmic monoculture modelled what happens when most firms in a labour market delegate their screening to correlated systems, and produced the result theorists had predicted for five years: certain candidates are now rejected by every employer in a sector because they sit in a region of candidate space that the shared screening model treats as undesirable.

This is the outcome homogenisation effect Rishi Bommasani's group formalised at NeurIPS in 2022. It has moved from thought experiment to operational reality.
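A small simulation shows why correlation, rather than the quality of any one screener, is what drives this result. It is a toy under strong assumptions: every firm rejects a candidate whose score falls below 0.5, scores are uniform random numbers, and the independent case treats firms' judgements as entirely unrelated, which real hiring is not. The function name and parameters are hypothetical.

```python
import random

def rejected_everywhere(n_candidates, n_firms, shared_model, seed=0):
    """Fraction of candidates rejected by every firm in the sector."""
    rng = random.Random(seed)
    shut_out = 0
    for _ in range(n_candidates):
        if shared_model:
            # One vendor's model scores the candidate for all firms.
            score = rng.random()
            scores = [score] * n_firms
        else:
            # Each firm forms its own, unrelated judgement.
            scores = [rng.random() for _ in range(n_firms)]
        if all(s < 0.5 for s in scores):
            shut_out += 1
    return shut_out / n_candidates

independent = rejected_everywhere(10_000, 5, shared_model=False)
shared = rejected_everywhere(10_000, 5, shared_model=True)
print(independent, shared)
```

Each firm rejects the same share of applicants in both worlds. What changes is who bears the rejections: with independent screeners only a few per cent of candidates are turned away by all five firms, while with a shared screener roughly half are.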

A Short History of Monocultures That Ended Badly

Every generation of technologists likes to believe its tools are so new that history has nothing to say about them. Every generation is wrong.

The story of human civilisation contains a long list of monocultures that looked like efficiency gains right up until the moment they revealed themselves as fragilities. Two are worth rereading.

The first is the Irish potato crop of the 1840s. By the early nineteenth century, the peasantry of Ireland had concentrated their agriculture almost entirely on a single variety, the Irish Lumper, because it produced more calories per acre than any alternative on the poor, boggy land they farmed. The Lumper was propagated vegetatively, which meant that every potato in the ground was, genetically, a clone of every other. When Phytophthora infestans arrived from the Americas in 1845, it encountered no genetic diversity to slow it down. The blight moved through the crop the way a single-variant virus moves through an unvaccinated population.

Roughly one million people starved. Another million emigrated. A population that had stood at eight and a half million before the famine was down to four and a half million by the end of the century.

The catastrophe was not caused by the blight alone. It was caused by the combination of a uniform crop and a novel pathogen, and the uniformity was the variable humans had chosen.

The second is the financial modelling monoculture of the early 2000s. For roughly two decades, risk management inside large banks converged on a single family of statistical tools built around Value-at-Risk, often in almost identical Monte Carlo implementations, parameterised against overlapping historical windows, and regulated into near-universal adoption by Basel II. Andrew Haldane, then of the Bank of England, gave a 2009 speech to the Financial Student Association in Amsterdam that remains the sharpest diagnosis of what had happened. He described a pre-crisis financial system in which “risk management became silo-based” and “finance became a monoculture” that “acted alike” under stress, “less disease-resistant” than a more heterogeneous system would have been.

When the underlying assumptions of the models broke in 2008, they broke everywhere at once, because everyone was running versions of the same model.

The crisis was not caused by bad modelling. It was caused by good modelling replicated until there was no dissent left in the system.

Both stories carry the same lesson. Monocultures look efficient in steady state and catastrophic in transition. They reduce small, distributed losses in the good years and concentrate them into a single correlated failure in the bad year. If you were trying to design a system that minimises variance on any given day and maximises the probability of a civilisation-scale shock, you could hardly do better than a globally adopted AI assistant trained by four companies on broadly overlapping data using broadly overlapping techniques.

The Counter-Arguments, Fairly Stated

It would be unfair to describe the situation without taking seriously the people who think the alarm is overblown. There are several of them. Some of their points are good.

The first counter-argument is that writing has always converged under the pressure of shared infrastructure. The King James Bible homogenised English prose. The Associated Press Stylebook homogenised American journalism. Microsoft Word's grammar checker, installed on half a billion machines, quietly imposed the active voice on a generation of office workers. Every technology that reduces the cost of producing acceptable text also narrows the range of text being produced. The question, the sceptics say, is not whether LLMs are narrowing the distribution, but whether the narrowing is qualitatively different from previous episodes.

The best evidence we have suggests that the convergence is faster and deeper than any previous episode. But the sceptics are right that proportionality matters.

The second counter-argument is that the monoculture is a transient phenomenon of the current training paradigm. Base models are getting better at preserving distributional diversity. Techniques like Direct Preference Optimisation, constitutional AI, and the community-alignment data-collection protocols described in the arXiv paper itself offer a plausible path to models that are both helpful and genuinely pluralistic. The problem, on this view, is not that AI is inherently homogenising; it is that the specific RLHF pipelines of 2022 to 2025 were homogenising, and the next generation of alignment methods will fix it.

Anthropic's work on constitutional pluralism and Meta's 2025 research on diversity-preserving fine-tuning both show real improvements on certain metrics. The question is whether the improvements are keeping pace with the scale of deployment. The honest answer is probably no.

The third counter-argument is the most interesting. It holds that humans were never as diverse in their expressed thought as the loss-of-diversity argument assumes. Take a population of first-year undergraduates, give them an essay prompt, and you already get substantial convergence on a handful of rhetorical templates, shared references, and predictable argumentative moves. The diversity we imagine we are losing was never there to begin with. What the LLMs are doing is making visible a pre-existing homogeneity and perhaps nudging it slightly harder in the direction it was already going.

There is something to this. Human culture has always moved through fashions, canons, and shared templates. The model-free baseline was not a paradise of idiosyncratic genius.

The fourth counter-argument is pragmatic. Even granting that LLMs reduce variance at the margin, they dramatically expand the number of people who can participate in written cognitive work. A non-native speaker in a field dominated by English-language publication can now write papers that reach the same readers as a native speaker. A dyslexic student can produce prose that reflects her thinking rather than her difficulty with spelling. A small-business owner without marketing staff can produce professional copy. The aggregate diversity of the cognitive commons might actually be higher, not lower, because more voices are in the room even if each individual voice is a bit more standardised.

The honest answer to all four arguments is that they do not dissolve the problem. They calibrate it.

The monoculture is not apocalyptic, but it is real. The convergence is not new in kind, but it is larger in scale than any previous episode. The loss of diversity is partial and might be partly reversible with better tuning methods, but the reversal is not happening at the pace the deployment is. And the expansion of participation is genuine, but it is not a substitute for the distinct kinds of cognitive variety the current systems are dampening.

We are left with a real problem that is smaller than the loudest critics claim and larger than the loudest defenders will admit.

Where Dissent Lives Now

One unsettling feature of the current moment is that the space in which intellectual dissent used to happen has been partly reabsorbed into the tools generating the mainstream.

When a student wants to argue against the received view, the assistant she uses to sharpen her argument has been trained on a corpus in which the received view is massively overrepresented, and tuned on preferences that treat the received view as the baseline of reasonableness. Her heterodox position can still be articulated. But only in the voice of the orthodoxy, with the orthodoxy's cadences and framings and preferred caveats.

The tool is helpful. It is just that the help comes in a specific register, and the register quietly pulls everything towards a centre.

This is not new in the history of dissent. Samizdat writers in the Soviet Union wrote in a Russian inherited from the official press. Heterodox economists spent the 1990s writing in the neoclassical vocabulary they were criticising. The tools of mainstream thought always bleed into the voice of people trying to escape it.

What is new is the speed and completeness of the bleed. When the tool is in every sentence, in every revision, in the autocomplete of the email drafting the pamphlet, the vocabulary of dissent has fewer places to hide.

This matters because epistemic diversity is the raw material out of which new ideas are built. Scientific revolutions, as Thomas Kuhn argued in 1962, happen when a tradition runs out of resources to solve its own puzzles and a cluster of previously marginal approaches suddenly becomes mainstream. If the marginal approaches are never articulated in the first place, because the tools of articulation bias their users towards the centre, the Kuhnian dynamic stalls. The revolutions do not come, because the conditions for revolution do not form.

This is the deepest worry in the monoculture literature, and the one hardest to test empirically, because the counterfactual is unobservable. We will not know which ideas were quietly filtered out of human discourse by the assistants of the 2020s.

We will only know what did not get said.

Interventions That Might Actually Help

The question is what to do. Nobody is sure. But interventions are being tried, and some look more promising than others.

The first category is technical. Preserving diversity during alignment is an active area of research, and the tools are improving. Regularisation penalties that explicitly reward response-distribution breadth. Constitutional methods that bake pluralism into the model's self-description. Multi-objective optimisation against competing preference signals. Community-alignment datasets built from stratified samples of global populations rather than the labelling pools of San Francisco contractors.

None of this is a complete solution, but the direction is legible. If the frontier labs decided tomorrow that response diversity was a first-class metric and weighted it at, say, twenty per cent of their tuning objective, the curves would move within months.

The question is whether they will. Response diversity is not what users say they want. Helpful answers are what they say they want. The gradient of commercial incentives does not obviously favour pluralism.

The second category is structural. Antitrust enforcement on foundation model markets is the obvious lever, and the European Commission has been exploring it since 2024, with the Digital Markets Act designation process now looking seriously at whether the largest LLM providers meet the gatekeeper thresholds. The theory of the case is that a market with four dominant providers training near-identical systems against near-identical benchmarks is not producing meaningful consumer choice. In the US, the Federal Trade Commission's 2024 inquiry into AI partnerships was a tentative step in a similar direction.

Neither jurisdiction has yet delivered a ruling that would materially shift the competitive landscape. But the conceptual groundwork is being laid.

The third category is institutional. The homogenising effects of mainstream models can be partly countered by the deliberate cultivation of distinctive alternatives. National or regional foundation model efforts, public-interest model trainings by universities or public broadcasters, domain-specific models trained on curated corpora that lie outside the standard scrape: none of these need to outcompete the frontier labs on general capability. They just need to exist, and to be good enough to be used by people who want an alternative voice.

The European EuroLLM project, Singapore's SEA-LION, Japan's Sakana work, the Allen Institute's continuing release of fully open weights and training data: these are the seeds of what might eventually be a more diverse ecosystem. Whether they grow into anything that genuinely counterbalances the big four depends on the next few years of funding and political will.

The fourth category is personal. Every writer, every coder, every thinker who uses these tools faces a daily choice that aggregates into the larger cultural effect. There is a real difference between letting the assistant do the thinking and letting it help with the thinking. It does not show up on any individual day. It shows up over months, in the divergence between users who kept their voice and users who surrendered it.

The people who have thought most seriously about this tend to converge on a discipline. Use the tool as a collaborator, not an author. Accept or reject each suggestion as a conscious choice. Reread the output and ask whether it still sounds like you. And, most importantly, write things sometimes without the tool at all, to keep the neural pathways of solo composition from atrophying.

These are small habits. They cannot fix a structural problem. But they are the only layer of defence available to the individual user right now, and they probably matter more than the user thinks.

The Diversity We Have Not Yet Lost

It is tempting to close a piece like this in the register of warning. But the warning register is part of what we are trying to escape.

The monoculture is not destiny. It is a tendency produced by a set of choices, most of which were made for defensible reasons and none of which are irreversible. The frontier labs could weight diversity higher. The regulators could act. The users could develop better habits. The open ecosystem could grow. A future model architecture could sidestep the RLHF trap in a way nobody currently sees.

The space of possible futures is wide.

What is not wide is the window. The feedback loops between models, users, training data, and cultural production are tightening. Every year in the current paradigm adds another layer of training data generated by previous models, another layer of user taste conditioned by previous outputs, another layer of convention baked into what counts as a good answer.

Monocultures are easier to prevent than to reverse, because the diversity you need to repopulate them with has to come from somewhere, and the main reservoir, the independent creative output of unassisted humans, is shrinking as a share of the total.

The Lumper potato, as any evolutionary biologist will tell you, was not an unreasonable choice in 1840. It grew well on poor land. It fed hungry people. The problem was not that the Lumper was bad.

The problem was that it was everywhere, and there was nothing else.

When the blight came, the absence of alternatives was what turned an agricultural problem into a civilisational one. The lesson is not that monocultures are always wrong. It is that they are always a bet on the future being continuous with the past, and the bet compounds over time until it is the only bet on the board.

The humans asking their assistants for help on 9 April 2026 are not doing anything wrong. They are using the tools available to them, the tools are genuinely helpful, and the sentences they produce are better than the sentences they would have produced alone. That is the seductive part. And the accurate part. And also the part that makes the aggregate picture so hard to see.

Somewhere underneath the millions of small, helpful interactions, the distribution of human expression is quietly tightening.

Whether it keeps tightening, or whether we decide to plant something else in the field alongside the Lumper, is still an open question. It may not stay open for long.

References and Sources

- Kleinberg, J., and Raghavan, M. (2021). “Algorithmic monoculture and social welfare.” Proceedings of the National Academy of Sciences, 118(22). https://www.pnas.org/doi/10.1073/pnas.2018340118

- Bommasani, R., et al. (2022). “Picking on the Same Person: Does Algorithmic Monoculture lead to Outcome Homogenization?” Proceedings of NeurIPS 2022. https://arxiv.org/abs/2211.13972

- “Cultivating Pluralism In Algorithmic Monoculture: The Community Alignment Dataset.” arXiv preprint 2507.09650 (2025, revised 2026). https://arxiv.org/abs/2507.09650

- Baek, J., and Bastani, H. (2026). “Strategic Hiring under Algorithmic Monoculture.” arXiv preprint 2502.20063. https://arxiv.org/pdf/2502.20063

- “The Homogenizing Effect of Large Language Models on Human Expression and Thought.” Trends in Cognitive Sciences (2026). https://www.cell.com/trends/cognitive-sciences/abstract/S1364-6613(26)00003-3

- Preprint version: “The Homogenizing Effect of Large Language Models on Human Expression and Thought.” arXiv:2508.01491. https://arxiv.org/abs/2508.01491

- Kobak, D., et al. (2024). “Delving into ChatGPT usage in academic writing through excess vocabulary.” arXiv:2406.07016. https://arxiv.org/abs/2406.07016

- Geng, M., et al. (2025). “Divergent LLM Adoption and Heterogeneous Convergence Paths in Research Writing.” arXiv:2504.13629. https://arxiv.org/abs/2504.13629

- Anderson, B. R., Shah, J. H., and Kreminski, M. (2024). “Homogenization Effects of Large Language Models on Human Creative Ideation.” Proceedings of the 16th ACM Conference on Creativity & Cognition. https://dl.acm.org/doi/10.1145/3635636.3656204

- Ghods, K., and Liu, P. (2025). “Evidence Against LLM Homogenization in Creative Writing.” https://kiaghods.com/assets/pdfs/LLMHomogenization.pdf

- “We're Different, We're the Same: Creative Homogeneity Across LLMs.” arXiv:2501.19361 (2025). https://arxiv.org/abs/2501.19361

- Kirk, R., et al. (2024). “Understanding the Effects of RLHF on LLM Generalisation and Diversity.” ICLR 2024. https://arxiv.org/abs/2310.06452

- “Testing English News Articles for Lexical Homogenization Due to Widespread Use of Large Language Models.” ACL 2025 Student Research Workshop. https://aclanthology.org/2025.acl-srw.95/

- “Examining linguistic shifts in academic writing before and after the launch of ChatGPT.” Scientometrics (2025). https://link.springer.com/article/10.1007/s11192-025-05341-y

- Haldane, A. G. (2009). “Rethinking the financial network.” Speech at the Financial Student Association, Amsterdam. Bank for International Settlements. https://www.bis.org/review/r090505e.pdf

- “Did Value at Risk cause the crisis it was meant to solve?” Institute for New Economic Thinking, Oxford. https://www.inet.ox.ac.uk/news/value-at-risk

- University of California Museum of Paleontology. “Monoculture and the Irish Potato Famine: cases of missing genetic variation.” Understanding Evolution. https://evolution.berkeley.edu/the-relevance-of-evolution/agriculture/monoculture-and-the-irish-potato-famine-cases-of-missing-genetic-variation/

- Wikipedia contributors. “Great Famine (Ireland).” https://en.wikipedia.org/wiki/Great_Famine_(Ireland)

- Kuhn, T. (1962). The Structure of Scientific Revolutions. University of Chicago Press.

- Wikipedia contributors. “Reinforcement learning from human feedback.” https://en.wikipedia.org/wiki/Reinforcement_learning_from_human_feedback

Tim Green, UK-based Systems Theorist & Independent Technology Writer

Tim explores the intersections of artificial intelligence, decentralised cognition, and posthuman ethics. His work, published at smarterarticles.co.uk, challenges dominant narratives of technological progress while proposing interdisciplinary frameworks for collective intelligence and digital stewardship.

His writing has been featured on Ground News and shared by independent researchers across both academic and technological communities.

ORCID: 0009-0002-0156-9795

Email: tim@smarterarticles.co.uk