Black Box No More: How AI Must Learn to Explain Itself

The rejection arrives without ceremony—a terse email stating your loan application has been declined or your CV hasn't progressed to the next round. No explanation. No recourse. Just the cold finality of an algorithm's verdict, delivered with all the warmth of a server farm and none of the human empathy that might soften the blow or offer a path forward. For millions navigating today's increasingly automated world, this scenario has become frustratingly familiar. But change is coming. As governments worldwide mandate explainable AI in high-stakes decisions, the era of inscrutable digital judgement may finally be drawing to a close.

The Opacity Crisis

Sarah Chen thought she had everything right for her small business loan application. Five years of consistent revenue, excellent personal credit, and a detailed business plan for expanding her sustainable packaging company. Yet the algorithm said no. The bank's loan officer, equally puzzled, could only shrug and suggest she try again in six months. Neither Chen nor the officer understood why the AI had flagged her application as high-risk.

This scene plays out thousands of times daily across lending institutions, recruitment agencies, and insurance companies worldwide. The most sophisticated AI systems—those capable of processing vast datasets and identifying subtle patterns humans might miss—operate as impenetrable black boxes. Even their creators often cannot explain why they reach specific conclusions.

The problem extends far beyond individual frustration. When algorithms make consequential decisions about people's lives, their opacity becomes a fundamental threat to fairness and accountability. A hiring algorithm might systematically exclude qualified candidates based on factors as arbitrary as their email provider or smartphone choice, without anyone—including the algorithm's operators—understanding why.

Consider the case of recruitment AI that learned to favour certain universities not because their graduates performed better, but because historical hiring data reflected past biases. The algorithm perpetuated discrimination whilst appearing entirely objective. Its recommendations seemed data-driven and impartial, yet they encoded decades of human prejudice in mathematical form.

The stakes of this opacity crisis extend beyond individual cases of unfairness. When AI systems make millions of decisions daily about credit, employment, healthcare, and housing, their lack of transparency undermines the very foundations of democratic accountability. Citizens cannot challenge decisions they cannot understand, and regulators cannot oversee processes they cannot examine. This fundamental disconnect between the power of these systems and our ability to comprehend their workings represents one of the most pressing challenges of our digital age.

The healthcare sector illustrates the complexity of this challenge particularly well. AI systems are increasingly used to diagnose diseases, recommend treatments, and allocate resources. These decisions can literally mean the difference between life and death, yet many of the most powerful medical AI systems operate as black boxes. Doctors find themselves in the uncomfortable position of either blindly trusting AI recommendations or rejecting potentially life-saving insights because they cannot understand the reasoning behind them.

The financial services industry has perhaps felt the pressure most acutely. Credit scoring algorithms process millions of applications daily, making split-second decisions about people's financial futures. These systems consider hundreds of variables, from traditional credit history to more controversial data points like social media activity or shopping patterns. The complexity of these models makes them incredibly powerful but also virtually impossible to explain in human terms.

The Bias Amplification Machine

Modern AI systems don't simply reflect existing biases—they amplify them with unprecedented scale and speed. When trained on historical data that contains discriminatory patterns, these systems learn to replicate and magnify those biases across millions of decisions. The mechanisms are often subtle and indirect, operating through proxy variables that seem innocuous but carry discriminatory weight.

An AI system evaluating creditworthiness might never explicitly consider race or gender, yet still discriminate through seemingly neutral data points. Research has revealed that shopping patterns, social media activity, or even the time of day someone applies for a loan can serve as proxies for protected characteristics. The algorithm learns these correlations from historical data, then applies them systematically to new cases.

A particularly troubling example emerged in mortgage lending, where AI systems were found to charge higher interest rates to borrowers from certain postcodes, effectively redlining entire communities through digital means. The systems weren't programmed to discriminate, but they learned discriminatory patterns from historical lending data that reflected decades of biased human decisions. The result was systematic exclusion disguised as objective analysis.
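One way to make the proxy problem concrete is to ask how well a supposedly neutral field predicts a protected characteristic. The sketch below is illustrative only: the function name `proxy_strength` and the toy postcode and group data are hypothetical, and a real audit would use far larger samples and proper statistical tests. It compares how often the protected group can be guessed with no information against how often it can be guessed from the feature alone.

```python
from collections import Counter, defaultdict

def proxy_strength(feature_values, protected_values):
    """Return (baseline_accuracy, accuracy_using_feature).

    baseline: accuracy of always guessing the most common protected group.
    using feature: accuracy of guessing the most common group within each
    feature value. A large gap suggests the feature acts as a proxy.
    """
    n = len(feature_values)
    baseline = Counter(protected_values).most_common(1)[0][1] / n

    # Count protected-group membership separately for each feature value.
    by_value = defaultdict(Counter)
    for feature, group in zip(feature_values, protected_values):
        by_value[feature][group] += 1

    correct = sum(counts.most_common(1)[0][1] for counts in by_value.values())
    return baseline, correct / n

# Hypothetical toy data: postcode area and a protected group label.
postcodes = ["A", "A", "A", "A", "B", "B", "B", "B"]
groups = ["x", "x", "x", "y", "y", "y", "y", "x"]

baseline, with_feature = proxy_strength(postcodes, groups)
print(f"baseline {baseline:.2f}, using postcode {with_feature:.2f}")
```

In this toy data the postcode lifts the guess rate well above the baseline, which is the signature of a proxy: a model given the postcode effectively has partial access to the protected attribute even though that attribute was never an input.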

The gig economy presents another challenge to traditional AI assessment methods. Credit scoring algorithms rely heavily on steady employment and regular income patterns. When these systems encounter the irregular earnings typical of freelancers, delivery drivers, or small business owners, they often flag these patterns as high-risk. The result is systematic exclusion of entire categories of workers from financial services, not through malicious intent but because the models were never designed to recognise modern work patterns.

These biases become particularly pernicious because they operate at scale with the veneer of objectivity. A biased human loan officer might discriminate against dozens of applicants. A biased algorithm can discriminate against millions, all whilst maintaining the appearance of data-driven, impartial decision-making. The mathematical precision of these systems can make their biases seem more legitimate and harder to challenge than human prejudice.

The amplification effect occurs because AI systems optimise for patterns in historical data, regardless of whether those patterns reflect fair or unfair human behaviour. If past hiring managers favoured candidates from certain backgrounds, the AI learns to replicate that preference. If historical lending data shows lower approval rates for certain communities, the AI incorporates that bias into its decision-making framework. The system becomes a powerful engine for perpetuating and scaling historical discrimination.

The speed at which these biases can spread is particularly concerning. Traditional discrimination might take years or decades to affect large populations. AI bias can impact millions of people within months of deployment. A biased hiring algorithm can filter out qualified candidates from entire demographic groups before anyone notices the pattern. By the time the bias is discovered, thousands of opportunities may have been lost, and the discriminatory effects may have rippled through communities and economies.

The subtlety of modern AI bias makes it especially difficult to detect and address. Unlike overt discrimination, AI bias often operates through complex interactions between multiple variables. A system might not discriminate based on any single factor, but the combination of several seemingly neutral variables might produce discriminatory outcomes. This complexity makes it nearly impossible to identify bias without sophisticated analysis tools and expertise.

The Regulatory Awakening

Governments worldwide are beginning to recognise that digital accountability cannot remain optional. The European Union's Artificial Intelligence Act represents the most comprehensive attempt yet to regulate high-risk AI applications, with specific requirements for transparency and explainability in systems that affect fundamental rights. The legislation categorises AI systems by risk level, with the highest-risk applications—those used in hiring, lending, and law enforcement—facing stringent transparency requirements.

Companies deploying such systems must be able to explain their decision-making processes and demonstrate that they've tested for bias and discrimination. The Act requires organisations to maintain detailed documentation of their AI systems, including training data, testing procedures, and risk assessments. For systems that affect individual rights, companies must provide clear explanations of how decisions are made and what factors influence outcomes.

In the United States, regulatory pressure is mounting from multiple directions. The Equal Employment Opportunity Commission has issued guidance on AI use in hiring, whilst the Consumer Financial Protection Bureau is scrutinising lending decisions made by automated systems. Several states are considering legislation that would require companies to disclose when AI is used in hiring decisions and provide explanations for rejections. New York City has implemented local laws requiring bias audits for hiring algorithms, setting a precedent for municipal-level AI governance.
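A bias audit of a hiring tool typically starts from a simple comparison of selection rates across groups. The sketch below shows one common metric, the impact ratio, under stated assumptions: the group names and counts are invented, the helper names are hypothetical, and the 0.8 cut-off is the "four-fifths" rule of thumb from US employment practice, used here only as an illustrative flag and not as a legal test.

```python
def selection_rates(outcomes):
    """outcomes maps group -> (number selected, number of applicants)."""
    return {group: selected / total for group, (selected, total) in outcomes.items()}

def impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    return {group: rate / highest for group, rate in rates.items()}

# Hypothetical audit data for an automated screening tool.
audit = {"group_a": (50, 100), "group_b": (30, 100)}

ratios = impact_ratios(audit)
# Assumed threshold: flag any group whose ratio falls below four-fifths.
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]

for group, ratio in ratios.items():
    print(f"{group}: impact ratio {ratio:.2f}")
print("flagged:", flagged)
```

A low ratio does not prove discrimination on its own, but it tells an auditor where to look, which is exactly the kind of evidence an opaque system cannot otherwise supply.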

The regulatory momentum reflects a broader shift in how society views digital power. The initial enthusiasm for AI's efficiency and objectivity is giving way to sober recognition of its potential for harm. Policymakers are increasingly unwilling to accept “the algorithm decided” as sufficient justification for consequential decisions that affect citizens' lives and livelihoods.

This regulatory pressure is forcing a fundamental reckoning within the tech industry. Companies that once prized complexity and accuracy above all else must now balance performance with explainability. The most sophisticated neural networks, whilst incredibly powerful, may prove unsuitable for applications where transparency is mandatory. This shift is driving innovation in explainable AI techniques and forcing organisations to reconsider their approach to automated decision-making.

The global nature of this regulatory awakening means that multinational companies cannot simply comply with the lowest common denominator. As different jurisdictions implement varying requirements for AI transparency, organisations are increasingly designing systems to meet the highest standards globally, rather than maintaining separate versions for different markets.

The enforcement mechanisms being developed alongside these regulations are equally important. The EU's AI Act includes substantial fines for non-compliance, with penalties reaching up to 7% of global annual turnover for the most serious violations. These financial consequences are forcing companies to take transparency requirements seriously, rather than treating them as optional guidelines.

The regulatory landscape is also evolving to address the technical challenges of AI explainability. Recognising that perfect transparency may not always be possible or desirable, some regulations are focusing on procedural requirements rather than specific technical standards. This approach allows for innovation in explanation techniques whilst ensuring that companies take responsibility for understanding and communicating their AI systems' behaviour.

The Performance Paradox

At the heart of the explainable AI challenge lies a fundamental tension: the most accurate algorithms are often the least interpretable. Simple decision trees and linear models can be easily understood and explained, but they typically cannot match the predictive power of complex neural networks or ensemble methods. This creates a dilemma for organisations deploying AI systems in critical applications.

The trade-off between accuracy and interpretability varies dramatically across different domains and use cases. In medical diagnosis, a more accurate but less explainable AI might save lives, even if doctors cannot fully understand its reasoning. The potential benefit of improved diagnostic accuracy might outweigh the costs of reduced transparency. However, in hiring or lending, the inability to explain decisions may violate legal requirements and perpetuate discrimination, making transparency a legal and ethical necessity rather than a nice-to-have feature.

Some researchers argue that this trade-off represents a false choice, suggesting that truly effective AI systems should be both accurate and explainable. They point to cases where complex models have achieved high performance through spurious correlations—patterns that happen to exist in training data but don't reflect genuine causal relationships. Such models may appear accurate during testing but fail catastrophically when deployed in real-world conditions where those spurious patterns no longer hold.

The debate reflects deeper questions about the nature of intelligence and decision-making. Human experts often struggle to articulate exactly how they reach conclusions, relying on intuition and pattern recognition that operates below conscious awareness. Should we expect more from AI systems than we do from human decision-makers? The answer may depend on the scale and consequences of the decisions being made.

The performance paradox also highlights the importance of defining what we mean by “performance” in AI systems. Pure predictive accuracy may not be the most important metric when systems are making decisions about people's lives. Fairness, transparency, and accountability may be equally important measures of system performance, particularly in high-stakes applications where the social consequences of decisions matter as much as their technical accuracy. This broader view of performance is driving the development of new evaluation frameworks that consider multiple dimensions of AI system quality beyond simple predictive metrics.

The challenge becomes even more complex when considering the dynamic nature of real-world environments. A model that performs well in controlled testing conditions may behave unpredictably when deployed in the messy, changing world of actual applications. Explainability becomes crucial not just for understanding current decisions, but for predicting and managing how systems will behave as conditions change over time.

The performance paradox is also driving innovation in AI architecture and training methods. Researchers are developing new approaches that build interpretability into models from the ground up, rather than adding it as an afterthought. These techniques aim to preserve the predictive power of complex models whilst making their decision-making processes more transparent and understandable.

The Trust Imperative

Beyond regulatory compliance, explainability serves a crucial role in building trust between AI systems and their human users. Loan officers, hiring managers, and other professionals who rely on AI recommendations need to understand and trust these systems to use them effectively. Without this understanding, human operators may either blindly follow AI recommendations or reject them entirely, neither of which leads to optimal outcomes.

Dr. Sarah Rodriguez, who studies human-AI interaction in healthcare settings, observes that doctors are more likely to follow AI recommendations when they understand the reasoning behind them. “It's not enough for the AI to be right,” she explains. “Practitioners need to understand why it's right, so they can identify when it might be wrong.” This principle extends beyond healthcare to any domain where humans and AI systems work together in making important decisions.

A hiring manager who doesn't understand why an AI system recommends certain candidates cannot effectively evaluate those recommendations or identify potential biases. The result is either blind faith in digital decisions or wholesale rejection of AI assistance. Neither outcome serves the organisation or the people affected by its decisions. Effective human-AI collaboration requires transparency that enables human operators to understand, verify, and when necessary, override AI recommendations.

Trust also matters critically for the people affected by AI decisions. When someone's loan application is rejected or job application filtered out, they deserve to understand why. This understanding serves multiple purposes: it helps people improve future applications, enables them to identify and challenge unfair decisions, and maintains their sense of agency in an increasingly automated world.

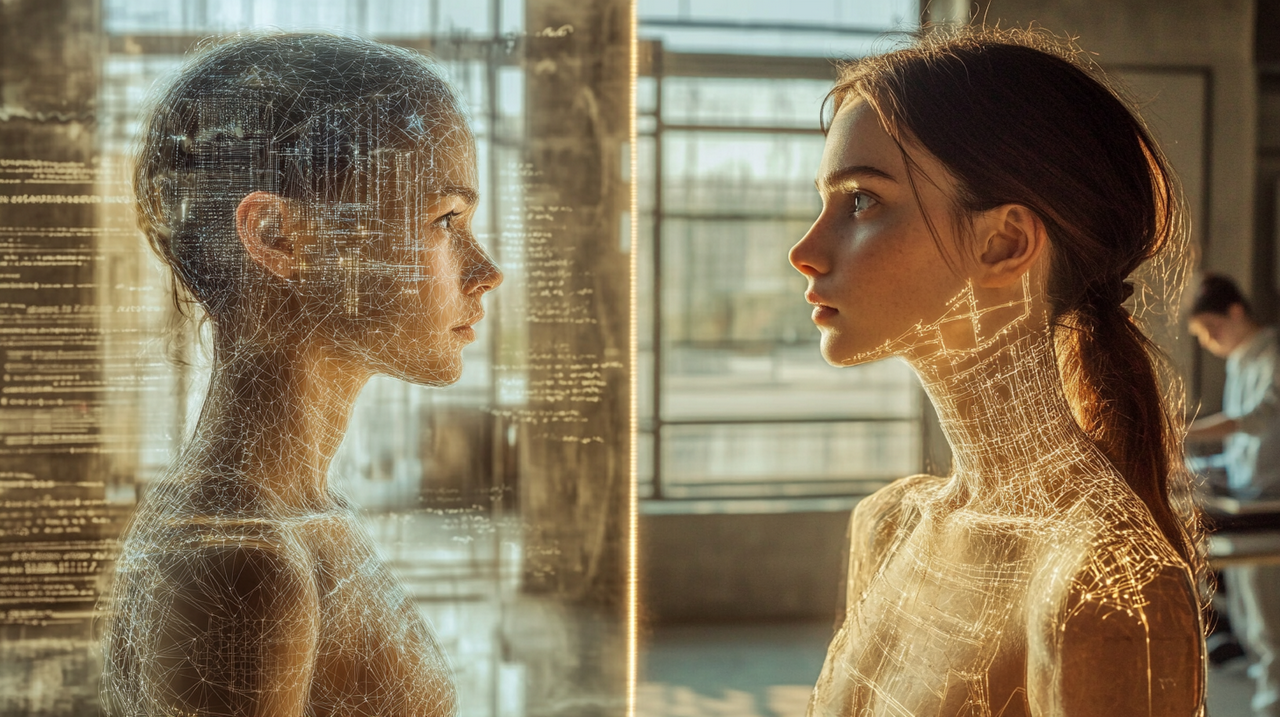

The absence of explanation can feel profoundly dehumanising. People reduced to data points, judged by inscrutable algorithms, lose their sense of dignity and control. Explainable AI offers a path back to more humane automated decision-making, where people understand how they're being evaluated and what they can do to improve their outcomes. This transparency is not just about fairness—it's about preserving human dignity in an age of increasing automation.

Trust in AI systems also depends on their consistency and reliability over time. When people can understand how decisions are made, they can better predict how changes in their circumstances might affect future decisions. This predictability enables more informed decision-making and helps people maintain a sense of control over their interactions with automated systems.

The trust imperative extends beyond individual interactions to broader social acceptance of AI systems. Public trust in AI technology depends partly on people's confidence that these systems are fair, transparent, and accountable. Without this trust, society may reject beneficial AI applications, limiting the potential benefits of these technologies. Building and maintaining public trust requires ongoing commitment to transparency and explainability across all AI applications.

The relationship between trust and explainability is complex and context-dependent. In some cases, too much information about AI decision-making might actually undermine trust, particularly if the explanations reveal the inherent uncertainty and complexity of automated decisions. The challenge is finding the right level of explanation that builds confidence without overwhelming users with unnecessary technical detail.

Technical Solutions and Limitations

The field of explainable AI has produced numerous techniques for making black box algorithms more interpretable. These approaches generally fall into two categories: intrinsically interpretable models and post-hoc explanation methods. Each approach has distinct advantages and limitations that affect their suitability for different applications.

Intrinsically interpretable models are designed to be understandable from the ground up. Decision trees, for instance, follow clear if-then logic that humans can easily follow. Linear models show exactly how each input variable contributes to the final decision. These models sacrifice some predictive power for the sake of transparency, but they provide genuine insight into how decisions are made.
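To see why a linear model counts as transparent, consider a minimal sketch of a logistic credit score. Everything here is hypothetical: the feature names, the weights, and the applicant are invented for illustration and are not drawn from any real lender. The point is that each input's contribution to the score is simply its weight multiplied by its value, so the explanation is the model itself.

```python
import math

# Hypothetical weights for an illustrative logistic scoring model.
WEIGHTS = {"years_trading": 0.4, "credit_score_scaled": 1.5, "debt_ratio": -2.0}
BIAS = -1.0

def explain_linear(applicant):
    """Return per-feature contributions and the resulting approval probability."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    score = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-score))  # logistic function
    return contributions, probability

# Hypothetical applicant.
applicant = {"years_trading": 5, "credit_score_scaled": 0.8, "debt_ratio": 0.5}
contributions, probability = explain_linear(applicant)

# List contributions from most helpful to most harmful.
for name, value in sorted(contributions.items(), key=lambda item: -item[1]):
    print(f"{name:>20}: {value:+.2f}")
print(f"approval probability: {probability:.2f}")
```

Reading the output, a loan officer can say which factor helped most and which one hurt, something a deep neural network cannot offer without extra tooling.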

Post-hoc explanation methods attempt to explain complex models after they've been trained. Techniques like LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) generate explanations by analysing how changes to input variables affect model outputs. These methods can provide insights into black box models without requiring fundamental changes to their architecture.
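The idea behind Shapley-based explanations can be sketched in a few lines. The code below is not the SHAP library and makes several simplifying assumptions: the `black_box` function is a made-up model, "missing" features are represented by a hypothetical all-zero baseline, and every feature ordering is enumerated, which is only feasible for a handful of features. It computes each feature's average marginal effect on the output as features are switched from the baseline to the applicant's actual values.

```python
from itertools import permutations

def shapley_values(model, instance, baseline):
    """Exact Shapley values by averaging marginal effects over all orderings."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))  # grows factorially with feature count
    for order in orderings:
        current = dict(baseline)
        previous_output = model(current)
        for name in order:
            # Switch one feature from its baseline value to its real value.
            current[name] = instance[name]
            new_output = model(current)
            totals[name] += new_output - previous_output
            previous_output = new_output
    return {name: total / len(orderings) for name, total in totals.items()}

def black_box(x):
    # Hypothetical model with an interaction between income and history.
    return 2.0 * x["income"] + 3.0 * x["income"] * x["history"] - 1.0 * x["debt"]

instance = {"income": 1.0, "history": 1.0, "debt": 2.0}
baseline = {"income": 0.0, "history": 0.0, "debt": 0.0}

values = shapley_values(black_box, instance, baseline)
print(values)
```

The attributions always sum to the difference between the model's output for the applicant and its output for the baseline, and the interaction term is shared between the two features involved. Note how much depends on the choice of baseline: a different reference point yields a different explanation for the same decision.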

However, current explanation techniques have significant limitations that affect their practical utility. Post-hoc explanations may not accurately reflect how models actually make decisions, instead providing plausible but potentially misleading narratives. The explanations generated by these methods are approximations that may not capture the full complexity of model behaviour, particularly in edge cases or unusual scenarios.

Even intrinsically interpretable models can become difficult to understand when they involve hundreds of variables or complex interactions between features. A decision tree with thousands of branches may be theoretically interpretable, but practically incomprehensible to human users. The challenge is not just making models explainable in principle, but making them understandable in practice.

Moreover, different stakeholders may need different types of explanations for the same decision. A data scientist might want detailed technical information about feature importance and model confidence. A loan applicant might prefer a simple explanation of what they could do differently to improve their chances. A regulator might focus on whether the model treats different demographic groups fairly. Developing explanation systems that can serve multiple audiences simultaneously remains a significant challenge.

The quality and usefulness of explanations also depend heavily on the quality of the underlying data and model. If a model is making decisions based on biased or incomplete data, even perfect explanations will not make those decisions fair or appropriate. Explainability is necessary but not sufficient for creating trustworthy AI systems.

Recent advances in explanation techniques are beginning to address some of these limitations. Counterfactual explanations, for example, show users how they could change their circumstances to achieve different outcomes. These explanations are often more actionable than traditional feature importance scores, giving people concrete steps they can take to improve their situations.
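A counterfactual explanation can be illustrated with a deliberately simple search. The sketch below assumes a hypothetical scoring rule (`approve`), invented feature names, and a brute-force strategy that changes one feature at a time in fixed steps; practical systems use more careful optimisation and restrict suggestions to changes a person can realistically make.

```python
def approve(applicant):
    # Hypothetical scoring rule using integer points to keep arithmetic exact.
    score = (5 * applicant["income_k"]
             - 8 * applicant["debt_k"]
             + 20 * applicant["years_trading"])
    return score >= 300

def single_feature_counterfactuals(applicant, steps, max_steps=50):
    """For each feature, find the nearest value (in whole steps) that flips
    the decision to approval while every other feature stays unchanged."""
    suggestions = {}
    for feature, step in steps.items():
        candidate = dict(applicant)
        for _ in range(max_steps):
            candidate[feature] += step
            if candidate[feature] < 0:
                break  # negative values make no sense for these features
            if approve(candidate):
                suggestions[feature] = candidate[feature]
                break
    return suggestions

# Hypothetical declined applicant; amounts in thousands.
applicant = {"income_k": 40, "debt_k": 10, "years_trading": 5}
# Step direction encodes which way counts as an improvement.
steps = {"income_k": 1, "debt_k": -1, "years_trading": 1}

suggestions = single_feature_counterfactuals(applicant, steps)
for feature, target in suggestions.items():
    print(f"Approved if {feature} were {target} (currently {applicant[feature]})")
```

Each suggestion reads as a sentence an applicant can act on, such as clearing a debt or reapplying after more years of trading, which is what makes this style of explanation more useful to them than a table of feature weights.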

Attention mechanisms in neural networks provide another promising approach to explainability. These techniques highlight which parts of the input data the model is focusing on when making decisions, providing insights into the model's reasoning process. Attention weights are an imperfect guide, since high attention does not always mean a feature drove the outcome, but they can help users understand what information the model considers most important.

The development of explanation techniques is also being driven by specific application domains. Medical AI systems, for example, are developing explanation methods that align with how doctors think about diagnosis and treatment. Financial AI systems are creating explanations that comply with regulatory requirements whilst remaining useful for business decisions.

The Human Element

As AI systems become more explainable, they reveal uncomfortable truths about human decision-making. Many of the biases encoded in AI systems originate from human decisions reflected in training data. Making AI more transparent often means confronting the prejudices and shortcuts that humans have used for decades in hiring, lending, and other consequential decisions.

This revelation can be deeply unsettling for organisations that believed their human decision-makers were fair and objective. Discovering that an AI system has learned to discriminate based on historical hiring data forces companies to confront their own past biases. The algorithm becomes a mirror, reflecting uncomfortable truths about human behaviour that were previously hidden or ignored.

The response to these revelations varies widely across organisations and industries. Some embrace the opportunity to identify and correct historical biases, using AI transparency as a tool for promoting fairness and improving decision-making processes. These organisations view explainable AI as a chance to build more equitable systems and create better outcomes for all stakeholders.

Others resist these revelations, preferring the comfortable ambiguity of human decision-making to the stark clarity of digital bias. This resistance highlights a paradox in demands for AI explainability. People often accept opaque human decisions whilst demanding transparency from AI systems. A hiring manager's “gut feeling” about a candidate goes unquestioned, but an AI system's recommendation requires detailed justification.

The double standard may reflect legitimate concerns about scale and accountability. Human biases, whilst problematic, operate at limited scale and can be addressed through training and oversight. A biased human decision-maker might affect dozens of people. A biased algorithm can affect millions, making the stakes of bias much higher in automated systems.

However, the comparison also reveals the potential benefits of explainable AI. While human decision-makers may be biased, their biases are often invisible and difficult to address systematically. AI systems, when properly designed and monitored, can make their decision-making processes transparent and auditable. This transparency creates opportunities for identifying and correcting biases that might otherwise persist indefinitely in human decision-making.

The integration of explainable AI into human decision-making processes also raises questions about the appropriate division of labour between humans and machines. In some cases, AI systems may be better at making fair and consistent decisions than humans, even when those decisions cannot be fully explained. In other cases, human judgment may be essential for handling complex or unusual situations that fall outside the scope of automated systems.

The human element in explainable AI extends beyond bias detection to questions of trust and accountability. When AI systems make mistakes, who is responsible? How do we balance the benefits of automated decision-making with the need for human oversight and control? These questions become more pressing as AI systems become more powerful and widespread, making explainability not just a technical requirement but a fundamental aspect of human-AI collaboration.

Real-World Implementation

Several companies are pioneering approaches to explainable AI in high-stakes applications, with financial services firms leading the way due to intense regulatory scrutiny. One major bank replaced its complex neural network credit scoring system with a more interpretable ensemble of decision trees, providing clear explanations for every decision whilst helping identify and eliminate bias. In recruitment, companies have developed AI systems that revealed excessive weight on university prestige, leading to adjustments that created more diverse candidate pools.

However, implementation hasn't been without challenges. These explainable systems require more computational resources and maintenance than their black box predecessors. Training staff to understand and use the explanations effectively has required significant investment in education and change management. The transition has also revealed gaps in data quality and consistency that had been masked by the complexity of previous systems.

The insurance industry has found particular success with explainable AI approaches. Several major insurers now provide customers with detailed explanations of their premiums, along with specific recommendations for reducing costs. This transparency has improved customer satisfaction and trust, whilst also encouraging behaviours that benefit both insurers and policyholders. The collaborative approach has led to better risk assessment and more sustainable business models.

Healthcare organisations are taking more cautious approaches to explainable AI, given the life-and-death nature of medical decisions. Many are implementing hybrid systems where AI provides recommendations with explanations, but human doctors retain final decision-making authority. These systems are proving particularly valuable in diagnostic imaging, where AI can highlight areas of concern whilst explaining its reasoning to radiologists.

The technology sector itself is grappling with explainability requirements in hiring and performance evaluation. Several major tech companies have redesigned their recruitment algorithms to provide clear explanations for candidate recommendations. These systems have revealed surprising biases in hiring practices, leading to significant changes in recruitment strategies and improved diversity outcomes.

Government agencies are also beginning to implement explainable AI systems, particularly in areas like benefit determination and regulatory compliance. These implementations face unique challenges, as government decisions must be not only explainable but also legally defensible and consistent with policy objectives. The transparency requirements are driving innovation in explanation techniques specifically designed for public sector applications.

The Global Perspective

Different regions are taking varied approaches to AI transparency and accountability, creating a complex landscape for multinational companies deploying AI systems. The European Union's comprehensive regulatory framework contrasts sharply with the more fragmented approach in the United States, where regulation varies by state and sector. China, for its part, has introduced AI governance principles that emphasise transparency and accountability, though implementation and enforcement remain unclear. Meanwhile, countries like Singapore and Canada are developing their own frameworks that balance innovation with protection.

These regulatory differences reflect different cultural attitudes towards privacy, transparency, and digital authority. European emphasis on individual rights and data protection has produced strict transparency requirements. American focus on innovation and market freedom has resulted in more sector-specific regulation. Asian approaches often balance individual rights with collective social goals, creating different priorities for AI governance.

The variation in approaches is creating challenges for companies operating across multiple jurisdictions. A hiring algorithm that meets transparency requirements in one country may violate regulations in another. Rather than maintain a separate system for each market, many companies build to the strictest standard they face. This convergence towards higher standards is driving innovation in explainable AI techniques and pushing the entire industry towards greater transparency.

International cooperation on AI governance is beginning to emerge, with organisations like the OECD and UN developing principles for responsible AI development and deployment. These efforts aim to create common standards that can facilitate international trade and cooperation whilst protecting individual rights and promoting fairness. The challenge is balancing the need for common standards with respect for different cultural and legal traditions.

The global perspective on explainable AI is also being shaped by competitive considerations. Countries that develop strong frameworks for trustworthy AI may gain advantages in attracting investment and talent, whilst also building public confidence in AI technologies. This dynamic is creating incentives for countries to develop comprehensive approaches to AI governance that balance innovation with protection.

Economic Implications

The shift towards explainable AI carries significant economic implications for organisations across industries. Companies must invest in new technologies, retrain staff, and potentially accept reduced performance in exchange for transparency. These costs are not trivial, particularly for smaller organisations with limited resources. The transition requires not just technical changes but fundamental shifts in how organisations approach automated decision-making.

However, the economic benefits of explainable AI may outweigh the costs in many applications. Transparent systems can help companies identify and eliminate biases that lead to poor decisions and legal liability. They can improve customer trust and satisfaction, leading to better business outcomes. They can also facilitate regulatory compliance, avoiding costly fines and restrictions that may result from opaque decision-making processes.

The insurance industry provides a compelling example of these economic benefits. When insurers use explainable AI to show customers what drives their premiums and which changes would lower them, customers are more likely to trust the price and to act on the advice, which benefits both themselves and the insurer. The result is a more collaborative relationship between insurers and customers, rather than an adversarial one.

Similarly, banks using explainable lending algorithms can help rejected applicants understand how to improve their creditworthiness, potentially turning them into future customers. The transparency creates value for both parties, rather than simply serving as a regulatory burden. This approach can lead to larger customer bases and more sustainable business models over time.
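
How a lender might turn a rejection into guidance can be sketched with a deliberately simple model. The example below assumes a hypothetical linear scoring rule: the feature names, weights, bias and threshold are all invented for illustration and are not drawn from any real lender. For a declined applicant, it computes the change to each single feature that would, on its own, bring the score up to the approval threshold. Real credit models are rarely this simple, and a production system would also need to check that a suggested change is realistic and lawful to recommend.

```python
# Hypothetical linear credit model: every weight and threshold here is invented.
WEIGHTS = {"credit_score": 0.01, "annual_revenue_k": 0.004, "debt_ratio": -3.0}
BIAS = -7.0
THRESHOLD = 0.0  # approve when score(applicant) >= THRESHOLD


def score(applicant):
    """Weighted sum of the applicant's features plus a bias term."""
    return BIAS + sum(WEIGHTS[name] * applicant[name] for name in WEIGHTS)


def single_feature_changes(applicant):
    """Change to each feature that would, on its own, lift the score to the threshold."""
    gap = THRESHOLD - score(applicant)
    if gap <= 0:
        return {}  # already approved, nothing to suggest
    # For a linear model, closing a gap of `gap` via one feature needs gap / weight.
    return {name: gap / weight for name, weight in WEIGHTS.items()}


# Illustrative applicant (made-up figures).
applicant = {"credit_score": 650, "annual_revenue_k": 200, "debt_ratio": 0.5}
for name, change in single_feature_changes(applicant).items():
    print(f"{name}: change by {change:+.2f}")
```

Under these invented numbers the applicant falls short by a small margin, and the sketch reports that a credit score roughly 120 points higher, or a lower debt ratio, would each be enough by itself. That is the kind of actionable explanation a rejected applicant could use.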

The economic implications extend beyond individual companies to entire industries and economies. Beyond the national advantages in investment and talent already noted, the development of explainable AI technologies is creating new markets and opportunities for innovation, whilst also imposing costs on organisations that must adapt to new requirements.

The labour market implications of explainable AI are also significant. As AI systems become more transparent and accountable, they may become more trusted and widely adopted, potentially accelerating automation in some sectors. However, the need for human oversight and interpretation of AI explanations may also create new job categories and skill requirements.

The investment required for explainable AI is driving consolidation in some sectors, as smaller companies struggle to meet the technical and regulatory requirements. This consolidation may reduce competition in the short term, but it may also accelerate the development and deployment of more sophisticated explanation technologies.

Looking Forward

The future of explainable AI will likely involve continued evolution of both technical capabilities and regulatory requirements. New explanation techniques are being developed that provide more accurate and useful insights into complex models. Researchers are exploring ways to build interpretability into AI systems from the ground up, rather than adding it as an afterthought. These advances may eventually resolve the tension between accuracy and explainability that currently constrains many applications.

Regulatory frameworks will continue to evolve as policymakers gain experience with AI governance. Early regulations may prove too prescriptive or too vague, requiring adjustment based on real-world implementation. The challenge will be maintaining innovation whilst ensuring accountability and fairness. International coordination may become increasingly important as AI systems operate across borders and jurisdictions.

The biggest changes may come from shifting social expectations rather than regulatory requirements. As people become more aware of AI's role in their lives, they may demand greater transparency and control over digital decisions. The current acceptance of opaque AI systems may give way to expectations for explanation and accountability that exceed even current regulatory requirements.

Professional standards and industry best practices will play crucial roles in this transition. Just as medical professionals have developed ethical guidelines for clinical practice, AI practitioners may need to establish standards for transparent and accountable decision-making. These standards could help organisations navigate the complex landscape of AI governance whilst promoting innovation and fairness.

The development of explainable AI is also likely to influence the broader relationship between humans and technology. Greater transparency and accountability could build the trust needed for wider adoption, accelerating the integration of AI into society whilst ensuring that this integration preserves human agency and dignity.

The technical evolution of explainable AI is likely to be driven by advances in several areas. Natural language generation techniques may enable AI systems to provide explanations in plain language that non-technical users can understand. Interactive explanation systems may allow users to explore AI decisions in real time, asking questions and receiving immediate responses. Visualisation techniques may make complex AI reasoning processes more intuitive and accessible.
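
The simplest form of plain-language explanation is a template filled in from a model's feature contributions. The sketch below assumes those contributions have already been computed by some attribution method; the function name, the wording and the example figures are hypothetical. It illustrates the idea only, and is far cruder than the natural language generation techniques the paragraph above anticipates.

```python
def describe(decision, contributions, top_n=2):
    """Turn signed feature contributions into a one-sentence explanation.

    `contributions` maps a human-readable feature name to a signed number:
    positive values pushed towards approval, negative values against it.
    """
    # Rank features by the size of their influence, largest first.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    parts = []
    for name, value in ranked[:top_n]:
        direction = "counted in your favour" if value > 0 else "counted against you"
        parts.append(f"{name} {direction}")
    return f"Your application was {decision}. The main factors: " + "; ".join(parts) + "."


# Made-up contributions for illustration.
message = describe(
    "declined",
    {"debt ratio": -1.5, "credit score": 0.4, "years trading": 0.1},
)
print(message)
```

Limiting the sentence to the top few factors is a design choice: a short explanation that a person can act on is usually more useful than a complete but unreadable list.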

The integration of explainable AI with other emerging technologies may also create new possibilities. Blockchain technology could provide immutable records of AI decision-making processes, enhancing accountability and trust. Virtual and augmented reality could enable immersive exploration of AI reasoning, making complex decisions more understandable through interactive visualisation.
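
The core property behind "immutable records" can be illustrated without a full blockchain. The sketch below is a plain hash chain, not a blockchain: it has no distributed consensus, and the record fields are hypothetical. Each logged decision stores the hash of the previous entry, so altering any past record breaks every hash that follows and the tampering becomes detectable.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, payload):
    """SHA-256 over the previous hash plus a canonical JSON form of the payload."""
    body = json.dumps(payload, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(log, payload):
    """Append a decision record that is linked to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"payload": payload, "prev_hash": prev_hash, "hash": _digest(prev_hash, payload)})


def verify(log):
    """Return True only if every entry still matches its stored hash and link."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical decision records.
log = []
append_record(log, {"applicant": "A-001", "decision": "declined", "top_factor": "debt ratio"})
append_record(log, {"applicant": "A-002", "decision": "approved", "top_factor": "credit score"})
print(verify(log))
```

A chain like this only shows that records have not been altered after the fact. It says nothing about whether the logged explanation was faithful to the model in the first place.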

The Path to Understanding

The movement towards explainable AI represents more than a technical challenge or regulatory requirement—it's a fundamental shift in how society relates to digital power. For too long, people have been subject to automated decisions they cannot understand or challenge. The black box era, where efficiency trumped human comprehension, is giving way to demands for transparency and accountability that reflect deeper values about fairness and human dignity.

This transition will not be easy or immediate. Technical challenges remain significant, and the trade-offs between performance and explainability are real. Regulatory frameworks are still evolving, and industry practices are far from standardised. The economic costs of transparency are substantial, and the benefits are not always immediately apparent. Yet the direction of change seems clear, driven by the convergence of regulatory pressure, technical innovation, and social demand.

The stakes are high because AI systems increasingly shape fundamental aspects of human life—access to credit, employment opportunities, healthcare decisions, and more. The opacity of these systems undermines human agency and democratic accountability. Making them explainable is not just a technical nicety but a requirement for maintaining human dignity in an age of increasing automation.

The path forward requires collaboration between technologists, policymakers, and society as a whole. Technical solutions alone cannot address the challenges of AI transparency and accountability. Regulatory frameworks must be carefully designed to promote innovation whilst protecting individual rights. Social institutions must adapt to the realities of AI-mediated decision-making whilst preserving human values and agency.

The promise of explainable AI extends beyond mere compliance with regulations or satisfaction of curiosity. It offers the possibility of AI systems that are not just powerful but trustworthy, not just efficient but fair, not just automated but accountable. These systems could help us make better decisions, identify and correct biases, and create more equitable outcomes for all members of society.

The challenges are significant, but so are the opportunities. As we stand at the threshold of an age where AI systems make increasingly consequential decisions about human lives, the choice between opacity and transparency becomes a choice between digital authoritarianism and democratic accountability. The technical capabilities exist to build explainable AI systems. The regulatory frameworks are emerging to require them. The social demand for transparency is growing stronger.

As explainable AI becomes mandatory rather than optional, we may finally begin to understand the automated decisions that shape our lives. The terse dismissals may still arrive, but they will come with explanations, insights, and opportunities for improvement. The algorithms will remain powerful, but they will no longer be inscrutable. In a world increasingly governed by code, that transparency may be our most important safeguard against digital tyranny.

The black box is finally opening. What we find inside may surprise us, challenge us, and ultimately make us better. But first, we must have the courage to look.

Tim Green, UK-based Systems Theorist & Independent Technology Writer

Tim explores the intersections of artificial intelligence, decentralised cognition, and posthuman ethics. His work, published at smarterarticles.co.uk, challenges dominant narratives of technological progress while proposing interdisciplinary frameworks for collective intelligence and digital stewardship.

His writing has been featured on Ground News and shared by independent researchers across both academic and technological communities.

ORCID: 0000-0002-0156-9795
Email: tim@smarterarticles.co.uk
